Maria Noesi

November 25, 2021

Can AI-Driven Research Steer Social Media’s Mental Health Crisis?

The amount of time we spend on social platforms keeps growing. And the experiences are increasingly sophisticated and immersive, making it harder for our brains to tell the real from the virtual. Because of this, it comes as no shock that these habit-forming platforms are affecting the mental health of the people who use them.

While research on these effects has been happening for a while, a recent leak of research conducted by Facebook has brought these studies into the spotlight.

Critics are using this as an opportunity to attack Facebook, highlighting negative findings such as the 15% of teens who reported that Instagram had a negative effect on their suicidal feelings. At the same time, defenders are pointing to positive findings such as the 39% of teens who reported that Instagram had a positive effect on those same feelings. But regardless of which side you're on, there's a bigger picture to see.

There are over 30 prominent social platforms with over 100 million active users, and a massive long tail of smaller platforms. With the Facebook whistleblower coming forward, we are all waking up to the scope of impact these platforms can have on our mental health — but so are the leaders who control these platforms’ future, and that represents an opportunity.

Decision-makers for the growing list of social platforms affecting our collective mental health have a choice to make. They can treat these impacts as a liability: bury their heads in the sand, ignore reality, and even try to hide the harmful effects from critics. Or they can lean into reality: accept that the impact is genuine and take responsibility for steering it in a healthy direction.

Historically, there's been a reasonable excuse to be the ostrich. Doing the research to understand and improve your platform's mental health impact was a massive effort — often prohibitively expensive, and so time-consuming that results weren’t relevant by the time you completed the research.

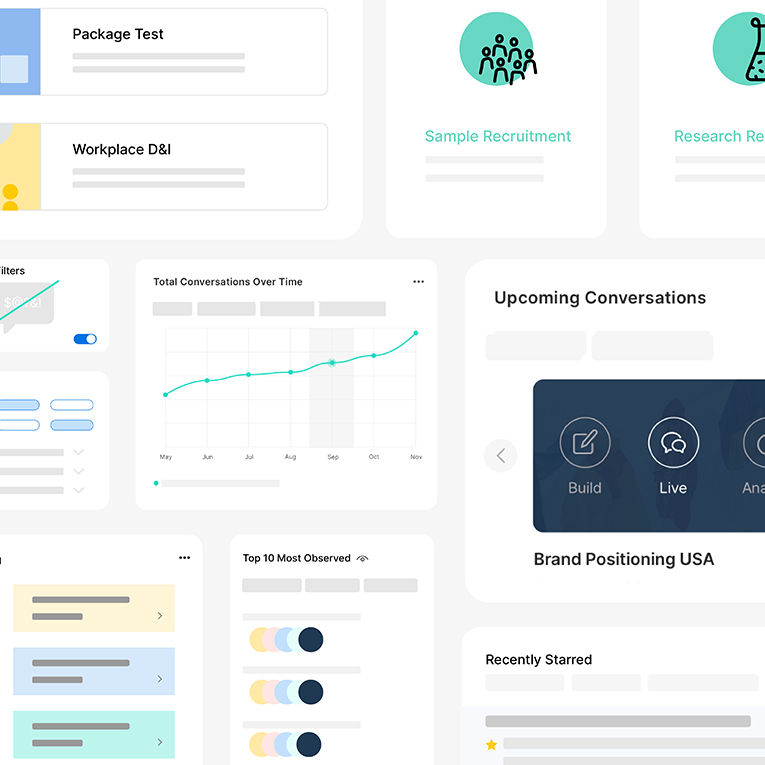

But that was then. Now, doing this kind of research has never been easier. A new breed of AI-driven research tools is making it faster, simpler, and more affordable. And these tools aren't just being used for brand trackers and concept tests anymore; they're being used to understand even the most sensitive issues. For example, the UN has incorporated them into its peacekeeping negotiations.

So the "it's too difficult" excuse is gone. Decision-makers can now understand and steer their platform's mental health impact. But will they? As the power dynamics of the internet invert, it seems a safe bet that the platforms that give users the future they want, including better mental health, will win in the long run.

If in the future we find ourselves in the metaverse, reflecting on this time in history, the platform owners who sought the truth and acted to make things better will be the heroes of this story. And it sure looks like some of them might already be working at Facebook.

While you're here, check out our guide on cutting-edge research methodology and the current state of the market research industry in 2021.